Allen Schmaltz

Computer Scientist

Founder, Reexpress AI, Inc.

Bio

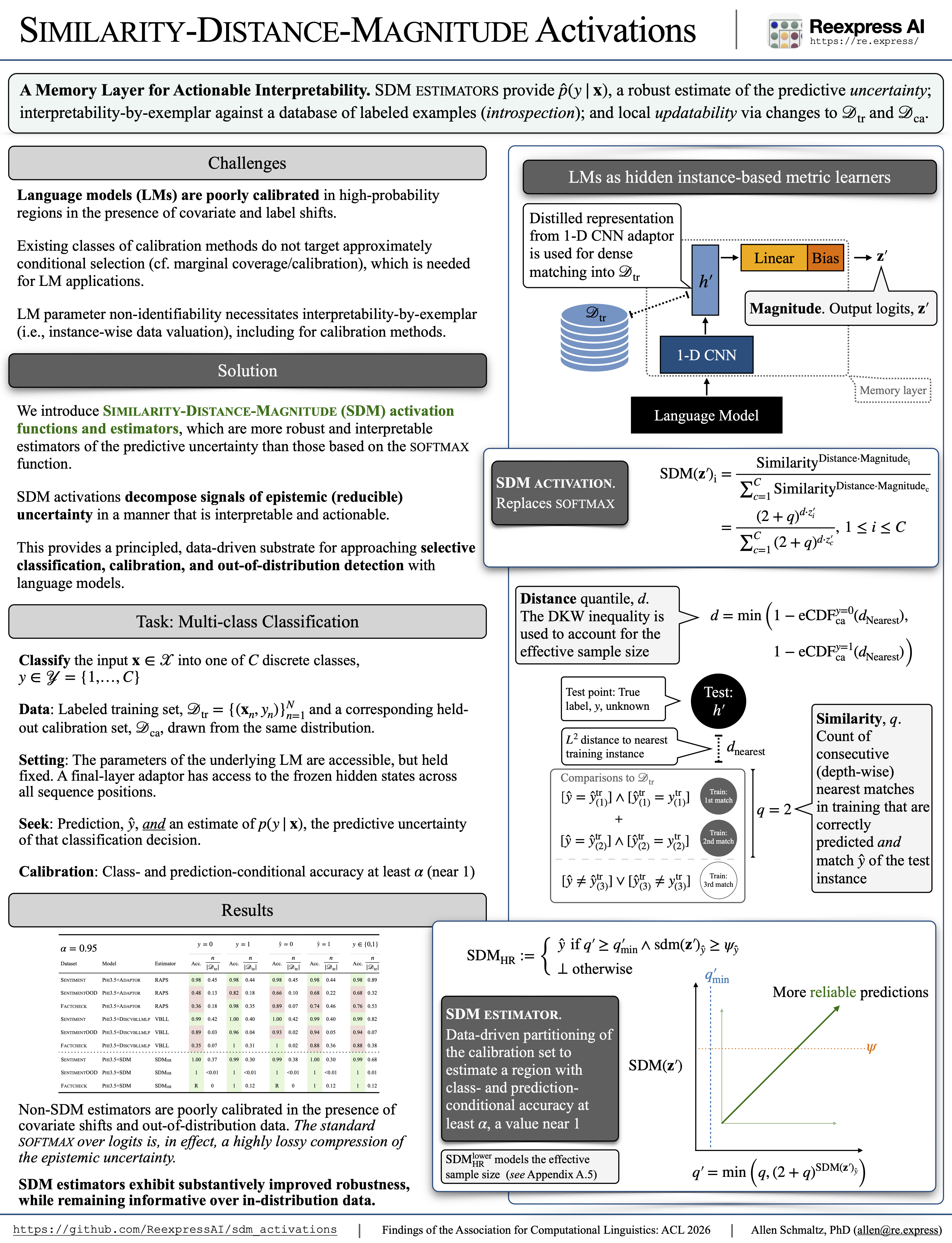

My work at Reexpress AI is focused on scaling Similarity-Distance-Magnitude (SDM) activation functions, SDM estimators, and SDM language models to build reliable, robust, and controllable AI systems.

This line of work is based on a novel decoupling of the sources of epistemic uncertainty for high-dimensional models via a new activation function that adds Similarity (i.e., correctly predicted depth-matches into training)-awareness and Distance-to-training-distribution-awareness to the existing output Magnitude (i.e., decision-boundary)-awareness of the softmax function. Conceptually, this new function is:

\[\mathrm{SDM}(\mathbf{z})_i = \frac{ \mathrm{Similarity}^{\mathrm{Distance} \cdot \mathrm{Magnitude}_i} }{ \sum_{c=1}^{C} \mathrm{Similarity}^{\mathrm{Distance} \cdot \mathrm{Magnitude}_c} }\]

with a corresponding negative log-likelihood loss that takes into account the change of base.
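As a rough illustration only, and not the Reexpress implementation, the conceptual form above can be sketched in plain Python. The sketch assumes that Similarity and Distance are already available as per-input scalars (how they are estimated is not covered here), that Similarity is positive so it can serve as an exponential base, and that "change of base" means the loss takes its logarithm in base Similarity. The function names `sdm_activation` and `sdm_nll` are hypothetical.

```python
import math


def sdm_activation(magnitudes, similarity, distance):
    """Conceptual SDM activation: similarity ** (distance * z_i), normalized.

    Assumption: `similarity` (> 0) and `distance` are precomputed scalars
    for this input; `magnitudes` are the C per-class outputs z_1..z_C.
    """
    if similarity <= 0:
        raise ValueError("similarity must be positive to act as a base")
    # base ** x == exp(x * ln(base)), so rescale and reuse a stable softmax.
    scale = distance * math.log(similarity)
    scaled = [scale * z for z in magnitudes]
    shift = max(scaled)  # subtract the max to avoid overflow in exp
    exps = [math.exp(s - shift) for s in scaled]
    total = sum(exps)
    return [e / total for e in exps]


def sdm_nll(magnitudes, similarity, distance, target):
    """Negative log likelihood with the log taken in base `similarity`.

    Assumption: this is one reading of "change of base"; it needs
    similarity > 1 so that the base-change divisor is positive.
    """
    if similarity <= 1:
        raise ValueError("similarity must exceed 1 for a base-similarity log")
    probs = sdm_activation(magnitudes, similarity, distance)
    # log_b(p) = ln(p) / ln(b)
    return -math.log(probs[target]) / math.log(similarity)
```

Under these assumptions, setting `similarity` to e and `distance` to 1 recovers the ordinary softmax and its usual negative log likelihood, while `distance = 0` flattens the output to a uniform distribution over the C classes.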

This enables constructing robust estimators of the predictive uncertainty over models with non-identifiable parameters, such as neural networks, and, by extension, building sequence prediction models with robust output verification and interpretability-by-exemplar as intrinsic properties.

This work reflects the evolution of, and lessons learned from, explainable and interpretable AI over the last decade, from early endogenous modeling of explanations with language models as classifiers (Schmaltz et al., 2016), to actionable notions of interpretability by analyzing neural networks as hidden instance-based metric learners (Schmaltz, 2021), and finally, to robust, interpretable estimators of the predictive uncertainty (Schmaltz, 2025).

A brief video overview of this line of work is available here.